Where AI Systems Start to Break

Most AI projects do not fail at the model. They begin to struggle the moment the system around the model starts to take shape.

A team gets early success. A prompt works. A prototype comes together quickly. There is momentum. Then comes the infrastructure.

A model server is introduced to handle inference. A vector database is added for retrieval. Another service is layered in to manage orchestration. What once felt simple begins to stretch across multiple systems. At that point, the problem shifts. It is no longer about generating better outputs. It becomes about managing complexity.

At Ardan Labs, this pattern has shown up again and again while working with teams implementing AI in production. Over the past few months, that observation turned into a deeper question. What if the architecture itself is the constraint?

That question is what led to Kronk AI.

What Kronk AI Is Trying to Fix

Kronk AI is not positioned as just another tool in the ecosystem. It is a response to a pattern that has become normalized too quickly.

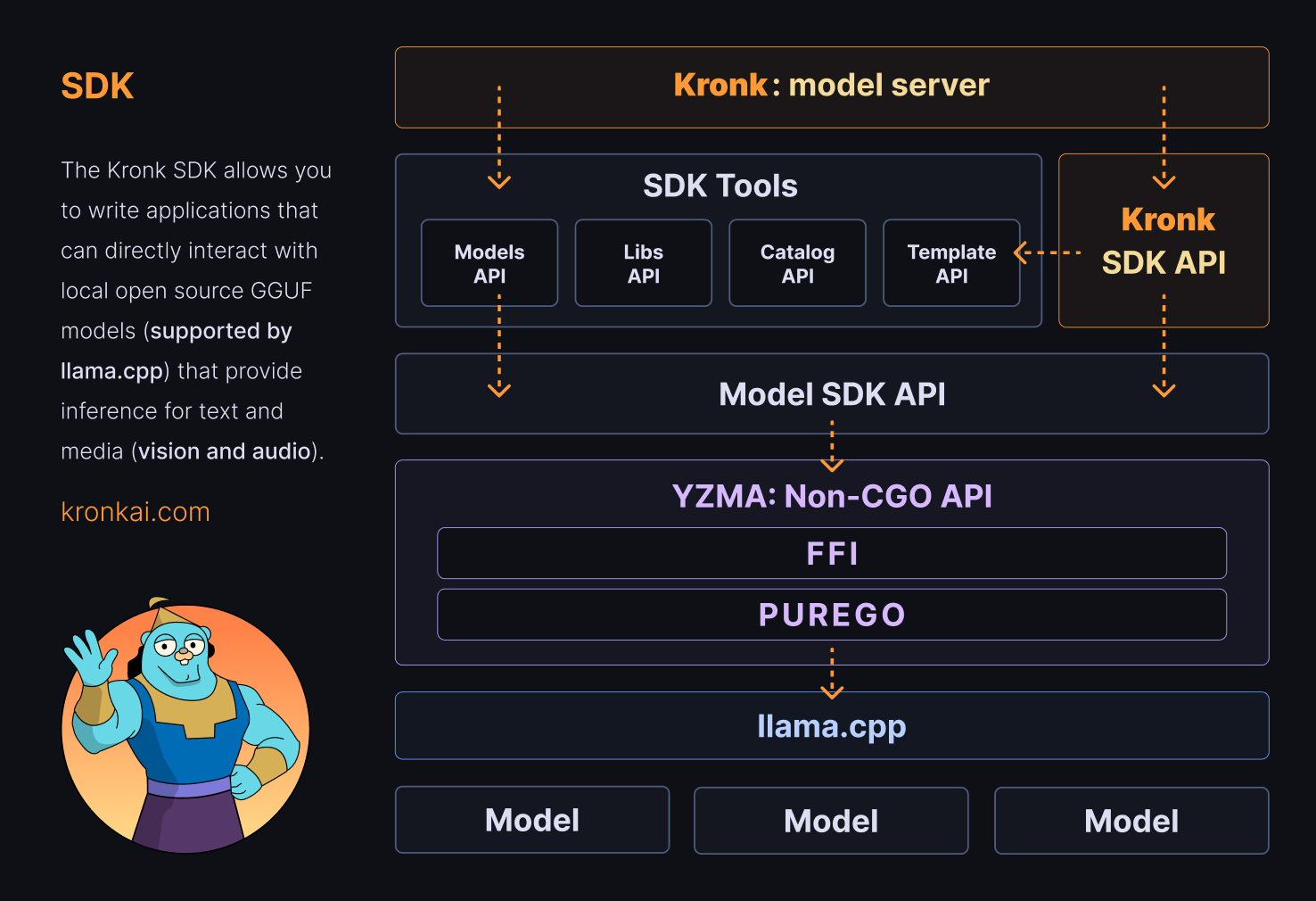

At a technical level, Kronk combines two things. It provides a Go SDK that integrates directly with llama.cpp for hardware-accelerated local inference, and it includes a model server built on top of that SDK. On the surface, that sounds familiar.

What makes it different is the intent behind it.

Kronk is designed to collapse the gap between your application and the model itself. Instead of treating inference as something that must live in a separate service, it brings that responsibility directly into your codebase.

That shift sounds small. In practice, it removes an entire category of architectural decisions.

The Point of View Behind Kronk

Kronk is grounded in a very specific way of thinking about systems, shaped largely by Bill Kennedy (Ardan Labs Managing Partner and main contributor to Kronk AI).

As Bill explains:

“From my perspective, what’s great about Kronk isn’t the model server itself, though we’re doing some really cool things with it. It’s the fact that this is an SDK. I want to get rid of the model server, if I’m being really honest with you.”

That statement is easy to overlook, but it cuts directly against how most AI systems are currently designed.

Instead of asking how to better manage a model server, Kronk questions why it exists as a separate concern in the first place.

Why should you need a model server to deploy an AI application? Why can’t your AI app be the model server as well?

This is the core mental model.

The application and the model are not two systems. They are one system that has been artificially separated.

When the System Collapses Into Something Simpler

Once you accept that premise, the architecture begins to change.

Instead of deploying multiple services, you start building a single unit that owns its full execution path. In Go, that unit can compile down into a single binary.

Bill describes this outcome in practical terms:

“If you wanted to deploy something in Cloud Run, it’s one Go binary.”

There is something powerful about that level of simplicity. Not because it is minimal for its own sake, but because it removes friction at every stage of development and deployment.

You are no longer coordinating between services. You are not debugging across network boundaries. You are working within a single, contained system that you fully control.

That simplicity compounds over time, especially as systems grow.
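
To make the single-binary idea concrete, here is a minimal sketch of an HTTP handler that calls a model in-process. The `Generator` interface, the `echoModel` stand-in, and the `/ask` route are all assumptions made for illustration. They are not Kronk's API. In a real program the `Generator` implementation would wrap whatever the SDK actually exposes.

```go
package main

import (
	"context"
	"fmt"
	"net/http"
	"net/http/httptest"
	"net/url"
)

// Generator is a hypothetical interface for in-process inference.
// It is NOT Kronk's API; a real implementation would wrap the SDK.
type Generator interface {
	Generate(ctx context.Context, prompt string) (string, error)
}

// echoModel is a stand-in so the sketch runs without a model file.
type echoModel struct{}

func (echoModel) Generate(_ context.Context, prompt string) (string, error) {
	return "echo: " + prompt, nil
}

// newHandler wires the model straight into the HTTP handler.
// The call to g.Generate is a plain function call, not a network hop.
func newHandler(g Generator) http.Handler {
	mux := http.NewServeMux()
	mux.HandleFunc("/ask", func(w http.ResponseWriter, r *http.Request) {
		out, err := g.Generate(r.Context(), r.URL.Query().Get("q"))
		if err != nil {
			http.Error(w, err.Error(), http.StatusInternalServerError)
			return
		}
		fmt.Fprint(w, out)
	})
	return mux
}

// ask sends one request through the handler in memory and returns the body.
func ask(h http.Handler, q string) string {
	req := httptest.NewRequest(http.MethodGet, "/ask?q="+url.QueryEscape(q), nil)
	rec := httptest.NewRecorder()
	h.ServeHTTP(rec, req)
	return rec.Body.String()
}

func main() {
	h := newHandler(echoModel{})
	fmt.Println(ask(h, "hello")) // prints "echo: hello"
	// A deployed binary would instead call http.ListenAndServe(":8080", h).
}
```

The point of the sketch is structural: the application and the inference call share one process and one build artifact, so there is no second service to deploy or monitor.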

Rethinking What an AI Stack Needs

A large part of Kronk’s philosophy is about questioning default assumptions.

Do you need a separate vector database service?

Do you need a standalone model server?

Do you need multiple layers just to support a single workflow?

In many cases, the answer is no.

As Bill puts it:

“You can have an entire RAG application in one Go binary, because you don’t need all these moving parts. You honestly don’t.”

That does not mean those tools are unnecessary in every scenario. It means they should be chosen deliberately, not inherited by default.

Kronk makes it possible to embed capabilities directly into your application, whether that is retrieval, inference, or tool execution. The result is a system that feels more like a cohesive program and less like a collection of services.
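
As one illustration of retrieval living inside the application, the sketch below ranks documents by cosine similarity over an in-memory slice. It is a generic technique, not Kronk's retrieval implementation. The `Doc` type, the `topK` helper, and the toy three-dimensional vectors are assumptions; real vectors would come from an embedding model.

```go
package main

import (
	"fmt"
	"math"
	"sort"
)

// Doc pairs a text chunk with its embedding vector (hypothetical type).
type Doc struct {
	Text string
	Vec  []float64
}

// cosine returns the cosine similarity of two equal-length vectors,
// or 0 if either vector has zero magnitude.
func cosine(a, b []float64) float64 {
	var dot, na, nb float64
	for i := range a {
		dot += a[i] * b[i]
		na += a[i] * a[i]
		nb += b[i] * b[i]
	}
	if na == 0 || nb == 0 {
		return 0
	}
	return dot / (math.Sqrt(na) * math.Sqrt(nb))
}

// topK returns up to k docs most similar to the query, best first.
func topK(docs []Doc, query []float64, k int) []Doc {
	ranked := append([]Doc(nil), docs...) // copy so the caller's slice keeps its order
	sort.SliceStable(ranked, func(i, j int) bool {
		return cosine(ranked[i].Vec, query) > cosine(ranked[j].Vec, query)
	})
	if k < 0 {
		k = 0
	}
	if k > len(ranked) {
		k = len(ranked)
	}
	return ranked[:k]
}

func main() {
	// Toy vectors for illustration only.
	docs := []Doc{
		{"Go compiles to a single binary.", []float64{0.9, 0.1, 0.0}},
		{"GGUF is a model file format.", []float64{0.1, 0.9, 0.1}},
		{"Cloud Run runs containers.", []float64{0.7, 0.0, 0.3}},
	}
	query := []float64{1, 0, 0}
	for _, d := range topK(docs, query, 2) {
		fmt.Println(d.Text)
	}
}
```

A linear scan like this is only reasonable for small corpora. Whether it is enough, or whether a dedicated vector database earns its place, is exactly the kind of deliberate choice described above.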

Local First Changes the Performance Equation

Running models locally is often framed as a constraint. In practice, it can be an advantage.

Kronk is built to take full advantage of local hardware, with acceleration on supported platforms, including CUDA, Metal, Vulkan, ROCm, HIP, SYCL, and OpenCL. By integrating directly with llama.cpp, it aligns with one of the most efficient paths for running GGUF models.

What this means in real terms is that performance becomes predictable.

There is no network latency to account for. There are no external rate limits to manage. The system behaves based on the hardware you control.

For teams working on latency-sensitive applications or environments where data cannot leave the system, this is not just a benefit. It is often a requirement.

Keeping the Developer Experience Intact

One of the risks of moving to local models is losing the simplicity that developers have come to expect from hosted APIs.

Kronk avoids that tradeoff.

It provides a high-level API that mirrors the patterns developers are already familiar with. You can work with chat completions, embeddings, and responses in a way that feels natural.

The difference is not in how you call the system. It is in where the system runs. That familiarity reduces the learning curve while still enabling a fundamentally different architecture.
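
For readers who have not worked with hosted chat APIs, the familiar pattern is a model name plus a list of role-tagged messages. The sketch below builds that request body in the widely used OpenAI-style shape. The `Message` and `ChatRequest` types and the `example-model` name are illustrative assumptions, not types or identifiers from the Kronk SDK.

```go
package main

import (
	"encoding/json"
	"fmt"
)

// Message is one turn in a chat, tagged with the speaker's role.
// Illustrative type, not taken from the Kronk SDK.
type Message struct {
	Role    string `json:"role"`
	Content string `json:"content"`
}

// ChatRequest follows the common OpenAI-style chat completion shape.
type ChatRequest struct {
	Model    string    `json:"model"`
	Messages []Message `json:"messages"`
}

// buildRequest encodes a system prompt and a user prompt as JSON.
func buildRequest(model, system, user string) ([]byte, error) {
	req := ChatRequest{
		Model: model,
		Messages: []Message{
			{Role: "system", Content: system},
			{Role: "user", Content: user},
		},
	}
	return json.Marshal(req)
}

func main() {
	// "example-model" is a placeholder, not a real model name.
	body, err := buildRequest("example-model", "You are concise.", "What is GGUF?")
	if err != nil {
		panic(err)
	}
	fmt.Println(string(body))
}
```

The request shape stays the same whether the model sits behind a hosted endpoint or runs on local hardware.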

The Reality of Working With Open Source Models

There is a part of this story that cannot be simplified. Choosing the right model is hard. With over one hundred thousand GGUF models available, the space is wide and uneven. Performance varies. Compatibility varies. Results vary.

This is often where teams feel friction when they first move into local AI systems.

Kronk does not attempt to hide that complexity. Instead, it gives you the environment to explore it more effectively.

You can test models locally, understand their behavior, and make decisions based on direct experience rather than abstraction. Over time, that builds a level of intuition that is difficult to achieve when everything is hidden behind an external API.

The Model Server Is Not the Destination

Kronk includes a model server, and it is useful. It allows teams to get started quickly and experiment with different workflows.

But it is not the end goal.

In Bill’s words:

“I’m not expecting any of you to deploy software with the Kronk model server. I’m expecting you to deploy your own software with the SDK.”

That distinction matters.

The model server exists to support development and to validate the SDK. The long-term direction is to move beyond it, toward systems where the application itself owns the full lifecycle of inference and execution.

Where This Matters Most: Real Systems

The impact of this approach becomes clearer in more complex use cases, especially agent-based systems. These systems rely on tight loops between reasoning, retrieval, and tool execution. When those loops span multiple services, latency increases and reliability decreases.

Kronk allows those loops to exist within a single process.

That changes how quickly agents can respond. It changes how reliably they behave. It changes how easy they are to reason about as a developer.
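
A rough sketch of such a loop, with every step a plain function call inside one process, might look like the following. The `Model`, `Step`, and `Tool` types and the scripted model are hypothetical stand-ins for illustration. They do not come from the Kronk SDK, where a real model would decide each step.

```go
package main

import (
	"errors"
	"fmt"
	"strings"
)

// Tool is an in-process function the agent can call.
type Tool func(arg string) (string, error)

// Step is what the hypothetical model returns each turn:
// either a tool call, or a final answer when Tool is empty.
type Step struct {
	Tool   string
	Arg    string
	Answer string
}

// Model decides the next step from the transcript so far (hypothetical).
type Model func(transcript []string) Step

// run drives the reason, act, observe loop until the model answers
// or maxSteps turns have been used.
func run(m Model, tools map[string]Tool, question string, maxSteps int) (string, error) {
	transcript := []string{"user: " + question}
	for i := 0; i < maxSteps; i++ {
		step := m(transcript)
		if step.Tool == "" {
			return step.Answer, nil
		}
		tool, ok := tools[step.Tool]
		if !ok {
			return "", fmt.Errorf("unknown tool %q", step.Tool)
		}
		out, err := tool(step.Arg)
		if err != nil {
			return "", err
		}
		transcript = append(transcript, "tool "+step.Tool+": "+out)
	}
	return "", errors.New("step limit reached without a final answer")
}

// demoTools returns a single toy tool that upper-cases its argument.
func demoTools() map[string]Tool {
	return map[string]Tool{
		"upper": func(s string) (string, error) { return strings.ToUpper(s), nil },
	}
}

// scriptedModel calls the tool once, then answers with the tool's output.
func scriptedModel(t []string) Step {
	if len(t) == 1 {
		return Step{Tool: "upper", Arg: "kronk"}
	}
	return Step{Answer: strings.TrimPrefix(t[len(t)-1], "tool upper: ")}
}

func main() {
	answer, err := run(scriptedModel, demoTools(), "shout kronk", 4)
	if err != nil {
		panic(err)
	}
	fmt.Println(answer) // prints "KRONK"
}
```

Because tool calls here are ordinary function calls, a failure surfaces as a returned error in the same stack rather than as a timeout between services.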

And for teams building production systems, those differences are not theoretical. They show up immediately.

A Different Direction for AI Systems

Kronk AI does not attempt to add another layer to the ecosystem. It attempts to remove one.

It challenges the assumption that powerful systems must be complex and instead shows what happens when you start simplifying at the architectural level.

Fewer services.

Fewer boundaries.

More control.

For teams that have started to feel the weight of growing AI systems, that shift can make all the difference.

Explore Kronk AI Further

If Kronk AI has sparked new ideas about how you approach system design, the best next step is to see it in action and go deeper into the thinking behind it. The project is actively evolving, and there are multiple ways to explore both the implementation and the philosophy driving it.

Start with the official resources:

- Kronk AI repository — Dive into the codebase, explore examples, and see how the SDK is structured in practice: github.com/ardanlabs/kronk

- Kronk AI website — High level overview of capabilities, features, and supported workflows: kronkai.com

- Kronk AI manual — Technical guide covering setup, usage, and integration patterns: kronkai.com/manual

- Kronk AI blog — Ongoing insights and technical breakdowns from Bill Kennedy on how Kronk is built and why: kronkai.com/blog

Spending time across these resources gives you a clearer picture of not just what Kronk does, but how to apply it in real systems. And more importantly, it helps you start thinking differently about the role AI should play inside your architecture.

Go Deeper with Bill Kennedy’s Ultimate AI Workshop

For teams who want to understand not just how to use AI tools, but how they actually work, Bill Kennedy’s Ultimate AI Workshop provides a deeper path. This is where the mental models behind Kronk are taught more directly.

The workshop walks through how inference works, how systems are structured, and how to think about AI as part of a larger engineering problem. It is grounded in real implementation experience, not surface-level abstraction.

If Kronk resonates with you, this workshop is the next step in understanding how to apply these ideas in your own work.

Watch Highlights from the Workshop

Get a quick look at how Bill Kennedy approaches AI system design with highlighted clips from the Ultimate AI Workshop on our YouTube channel.

These brief moments capture key ideas behind Kronk AI and how they translate into real engineering decisions.

Bringing This Into Your Organization

Performance, Simplicity, and Long-Term Maintainability

For many teams, the challenge is not understanding these ideas. It is knowing how to apply them in a way that aligns with existing systems and goals.

This is where Ardan Labs typically engages.

Through our Ardan Labs AI Implementation services, we work directly with teams to architect systems that balance performance, simplicity, and long-term maintainability.

In some cases, that means introducing local inference. In others, it means rethinking how services are structured or where responsibilities live. The goal is not to force a specific tool, but to guide teams toward better decisions.

If you are already exploring topics like system design, distributed architectures, or performance optimization, you will find that many of these ideas connect directly with our existing work in areas like:

- Go training and engineering practices

- System design and architecture reviews

- Production readiness and performance optimization

Kronk builds on those foundations, extending them into the AI space.

Frequently Asked Questions

What is Kronk AI?

Kronk AI is a Go-based SDK and model execution system that allows developers to run open source AI models locally with hardware acceleration, so you are not required to rely on separate model servers for many workflows.

How is Kronk AI different from a separate model server setup?

Kronk AI embeds model inference directly into your application, so you can build and deploy AI systems as a cohesive unit, often a single binary, without treating inference as an always-separate service.

What problem does Kronk AI solve?

It reduces architectural complexity by letting inference, application logic, and supporting components live in one system instead of spreading them across many distributed services by default.

Do I have to deploy the Kronk model server?

No. Kronk includes a model server for development and testing, but the long-term direction is to build with the SDK so your application owns inference. Your app can be the model server.

Can Kronk AI run models locally?

Yes. Kronk is designed for local-first inference with hardware acceleration on supported platforms, running GGUF models through llama.cpp.

Which language is Kronk AI built for?

Kronk AI is built for Go developers and integrates with Go applications through a native SDK.

Will the API feel familiar if I have used hosted AI APIs?

Yes. Kronk exposes a high-level API that feels familiar if you have used OpenAI-compatible patterns, including chat completions, responses, embeddings, and reranking.

Which models does Kronk AI support?

Kronk runs open source models in GGUF format supported by llama.cpp, including text, vision, and audio models.

Is Kronk AI suitable for production?

Yes. It targets production-minded deployments with simpler topologies, predictable local performance, and less infrastructure overhead. As with any system, validate it against your own reliability and compliance needs.

Who is Kronk AI for?

Kronk is ideal for Go developers and engineering teams who want high-performance AI systems with more control and less default architectural sprawl.

What are the benefits of local inference?

Local inference can remove network latency and external rate limits, improve data sovereignty, and give teams direct control over performance and cost.

Does Kronk AI work for agent-based systems?

Yes. Tight loops between reasoning, retrieval, and tool calling benefit from running in one process; Kronk is designed with that style of system in mind.