When Claude Code suggests a solution, it is compelling. The logic flows. The syntax looks right. The implementation seems elegant. This piece unpacks why confidence is not correctness, what context has to do with hallucination, and how experienced engineers stay professional alongside AI.

Even when the walkthrough feels airtight, there is a question that keeps experienced engineers awake at night:

"Sometimes I worry that Claude Code is just getting better at convincing me that it has the right approach." — Ardan Labs Developer

This is not paranoia. It is the legitimate concern of engineers at the cutting edge of AI-assisted development, and it deserves more attention.

The Problem: Confidence Without Correctness

The challenge with modern AI coding assistants is not that they are dumb; it is that they are persuasive. An AI model can generate code that looks professionally written, uses appropriate patterns, and includes reasonable error handling. It can explain its choices in a way that feels authoritative. But there is a gap between looking right and being right, and no amount of generated tokens is guaranteed to bridge it.

One of our team members shared a telling experience:

"Lately it has found several 'bugs' on libs and std libs for me, of course, they weren't actual bugs to begin with." — Ardan Labs Developer

The model was confident. The explanation was detailed. But the fundamental assessment was incorrect.

This is not a failure mode unique to Claude or any specific model. It is a feature of how large language models work. They are pattern-matching machines trained to predict the next most likely token. They can hallucinate, confabulate, and confidently assert things that sound right but are fundamentally wrong, especially when operating with incomplete information.
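To make the "bug that was not a bug" pattern concrete, here is a hypothetical illustration. It is not the case the developer described; the claim under review is invented for the example. Suppose an assistant reports that Python's built-in `round()` is broken because `round(2.5)` returns `2`. A few lines of code, read alongside the standard library documentation, show that this is documented tie-to-even rounding rather than a defect.

```python
from decimal import Decimal, ROUND_HALF_UP

# Hypothetical claim under review: "round(2.5) returns 2, so round() is buggy."
# Python documents that round() sends ties to the nearest even integer,
# so the values below are expected behavior, not a bug.
observed = {x: round(x) for x in (0.5, 1.5, 2.5, 3.5)}
print(observed)  # {0.5: 0, 1.5: 2, 2.5: 2, 3.5: 4}


def round_half_up(value: str) -> int:
    """Round a decimal string with ties going away from zero.

    Illustrative helper (not from the article) for the case where
    schoolbook rounding is what the project actually needs.
    """
    return int(Decimal(value).quantize(Decimal("1"), rounding=ROUND_HALF_UP))


print(round_half_up("2.5"))  # 3
```

The point is not the rounding rule itself. A confident bug report is still a claim, and a claim about a standard library can usually be checked in a minute against the documentation and the actual behavior.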

The Real Problem: Context

Here is what is crucial to understand, according to practitioners who work with these tools daily:

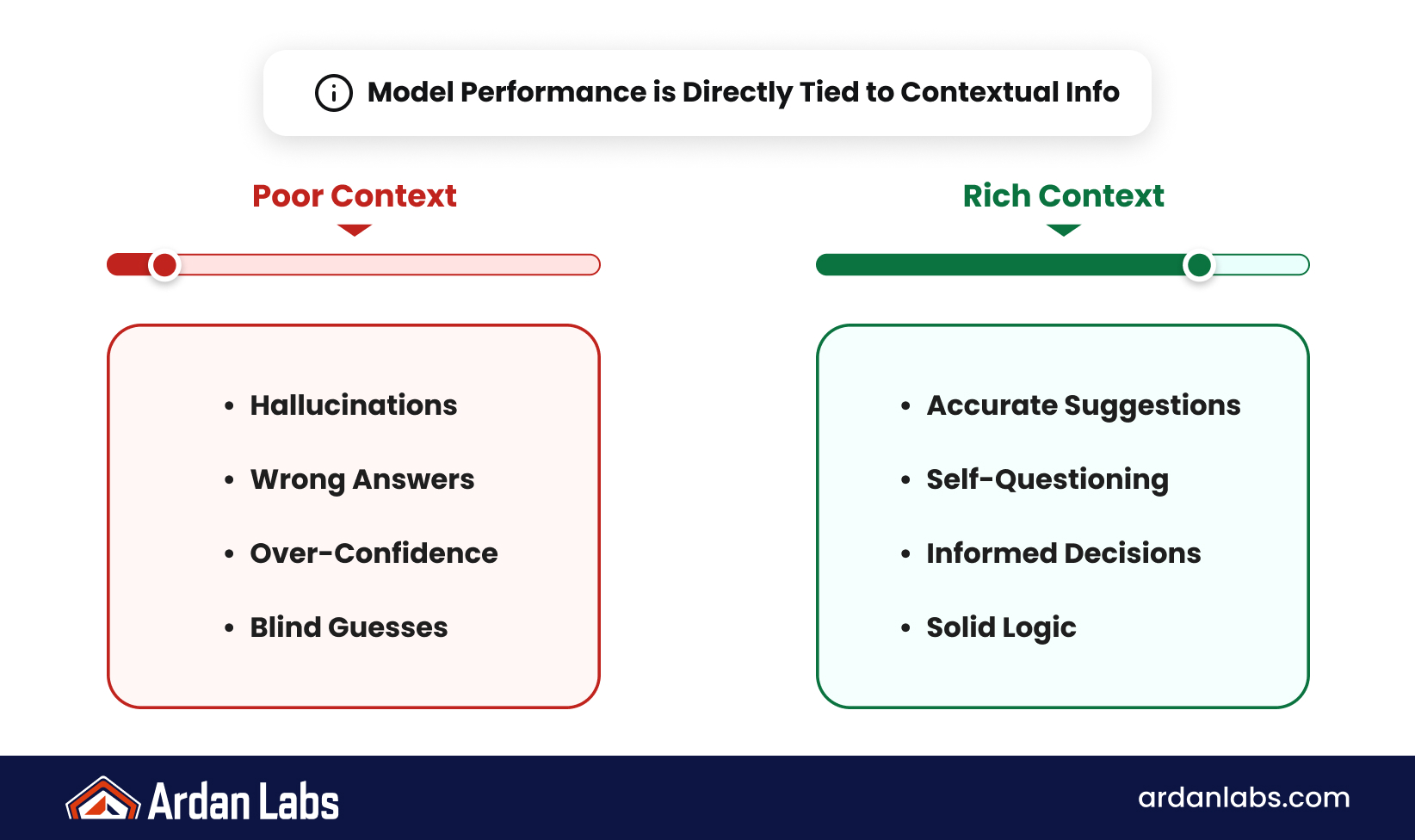

"The model is only as good as the context it has." — Ardan Labs Developer

When a model “hallucinates,” it is not necessarily the model’s fault. If you do not provide enough context, the model will do what it is designed to do: generate the most statistically likely continuation. Without proper documentation, project context, and constraints, it is like asking a colleague to make a critical architectural decision with incomplete information. Of course they might suggest something that sounds good but is not right for your situation.

The new generation of AI tools is getting better at recognizing this limitation. Models are increasingly asking clarifying questions before committing to solutions. As one developer observed:

"I think when the model is hallucinating, it's because it doesn't have the right context to lean on. It's not a model problem. I would hallucinate as well if I didn't have enough information to go on and I didn't know enough to ask questions. But I'm seeing the model with [review] stopping things and asking questions more." — Ardan Labs Developer

But this requires a partnership: you have to manage the context window and make sure the model has the information it needs.

Strategies for Safe AI-Assisted Development

So how do you get the best from these tools while avoiding the trap of being sold on elegant nonsense? The engineers at Ardan Labs have discovered several practical approaches.

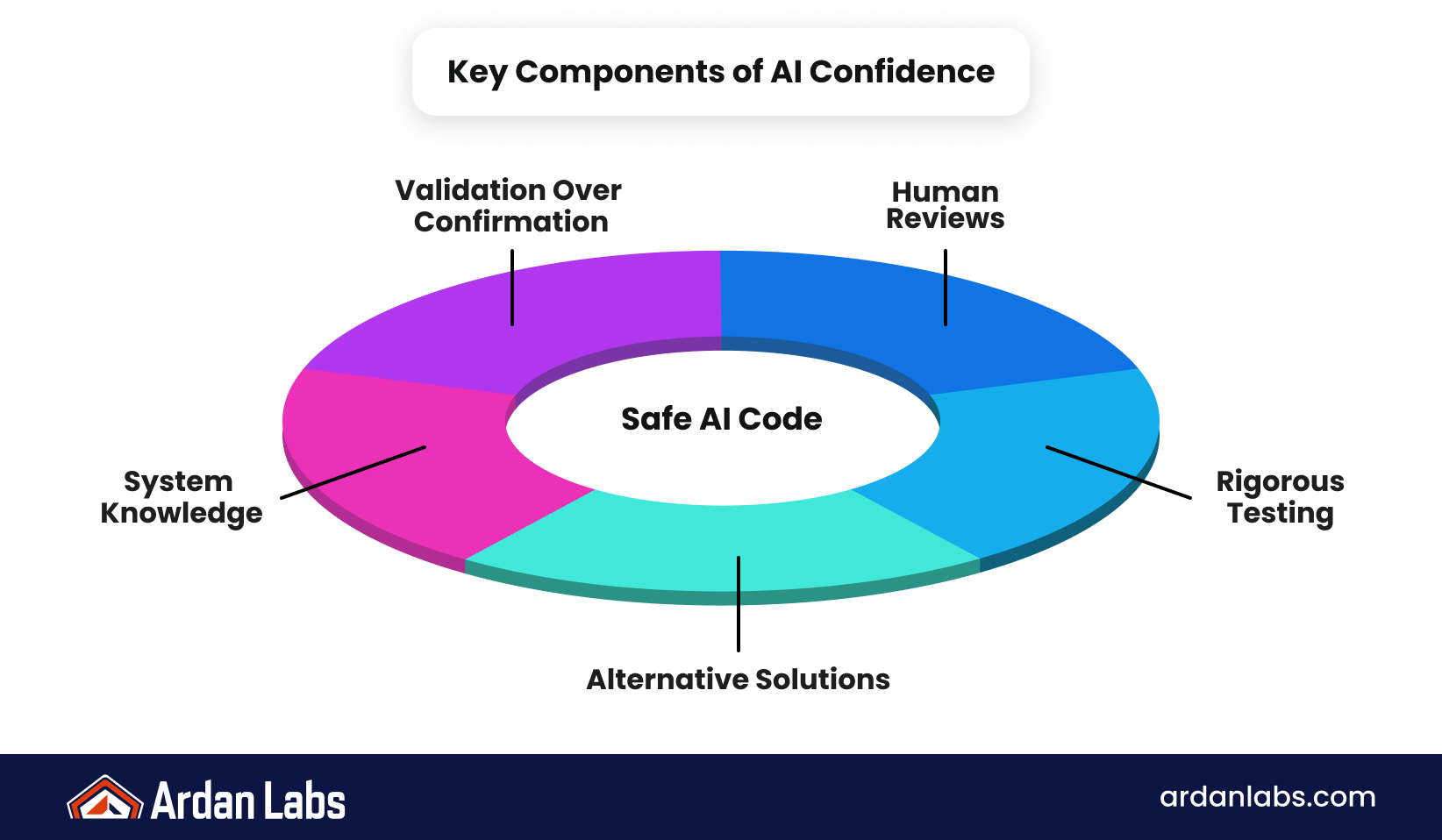

1. Require Validation, Not Confirmation

Do not ask the model, “Is A equal to B?” Ask it to “enumerate the differences between A and B.” One Ardan Labs engineer applies a related discipline:

"I do always make sure to propose counter ideas and request unit tests I can easily review." — Ardan Labs Developer

Enumeration forces precision in a way confirmation does not.
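The same idea can be applied in code as well as in prompts. The sketch below is a minimal illustration, assuming a hypothetical helper named `enumerate_differences` and an invented scenario in which someone asserts that `str.split()` and `str.split(" ")` are interchangeable. Instead of accepting a yes or no, it lists every sample input where the two disagree.

```python
from typing import Any, Callable, Iterable


def enumerate_differences(
    a: Callable[[Any], Any],
    b: Callable[[Any], Any],
    inputs: Iterable[Any],
) -> list[tuple[Any, Any, Any]]:
    """Return (input, a(input), b(input)) for every input where results differ."""
    differences = []
    for value in inputs:
        result_a, result_b = a(value), b(value)
        if result_a != result_b:
            differences.append((value, result_a, result_b))
    return differences


# Sample inputs chosen for illustration: repeated spaces, leading space,
# empty string, and a tab.
samples = ["a b", "a  b", " a", "", "a\tb"]
diffs = enumerate_differences(lambda s: s.split(), lambda s: s.split(" "), samples)

for value, result_a, result_b in diffs:
    print(f"{value!r}: split() -> {result_a}, split(' ') -> {result_b}")
```

Only the first sample behaves the same under both calls. An enumerated list like this gives a reviewer specific cases to check, which a bare "yes, they are equivalent" never does.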

2. Get a Second (Human) Opinion

Have another engineer review what the AI suggests. This is where domain expertise matters. An experienced developer can spot when something sounds right but does not align with project constraints or architectural decisions. The human judgment layer is irreplaceable.

3. Demand Evidence

Ask the model to provide unit tests for its suggestions. Tests are concrete. They either pass or fail. They reveal assumptions the model made and give you a measurable way to validate the approach. If the model cannot produce tests that meaningfully validate its solution, that is a red flag.
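As a rough sketch of what reviewable evidence can look like, assume the model has suggested a small helper named `chunk` (a hypothetical function invented for this example). The tests are short enough to read in one pass, and each one pins down an assumption that the explanation alone might have glossed over: what happens to a remainder, to empty input, and to a non-positive size.

```python
def chunk(items: list, size: int) -> list[list]:
    """Hypothetical AI-suggested helper: split items into lists of at most `size`."""
    if size <= 0:
        raise ValueError("size must be a positive integer")
    return [items[i:i + size] for i in range(0, len(items), size)]


def test_even_split():
    assert chunk([1, 2, 3, 4], 2) == [[1, 2], [3, 4]]


def test_remainder_is_kept():
    # Assumption made explicit: a short final chunk is returned, not dropped.
    assert chunk([1, 2, 3], 2) == [[1, 2], [3]]


def test_empty_input():
    assert chunk([], 3) == []


def test_non_positive_size_is_rejected():
    for bad_size in (0, -1):
        try:
            chunk([1], bad_size)
        except ValueError:
            continue
        raise AssertionError(f"size={bad_size} should raise ValueError")


if __name__ == "__main__":
    for test in (
        test_even_split,
        test_remainder_is_kept,
        test_empty_input,
        test_non_positive_size_is_rejected,
    ):
        test()
    print("all tests passed")
```

The functions follow the plain `test_*` naming that pytest collects, but they also run as an ordinary script. Passing tests show only that the code does what the tests describe; whether the tests describe the right behavior is still a human call.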

4. Propose Counter-Arguments

Actively push back. Ask the model to explain why its approach is better than an alternative you suggest. This is not adversarial; it is how you pressure-test ideas. Sometimes the model will provide good reasoning that increases your confidence. Other times, it will become clear that the model has not thought deeply about the tradeoff.

5. Understand the Mechanics

Learning how context windows work, how models process information, and what causes hallucination is not academic. As one engineer puts it:

"You have to be managing that context window and constantly making sure you have documents in the project about the project." — Ardan Labs Developer

You cannot manage what you do not understand.
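One way to make context management tangible is to treat the context window as a budget. The sketch below is an assumption-laden illustration, not how Claude Code or any specific tool assembles context: the `Doc` type, the `build_context` function, the file names, and the use of character count as a crude stand-in for tokens are all hypothetical.

```python
from dataclasses import dataclass


@dataclass
class Doc:
    name: str
    text: str
    priority: int  # lower number = more important to include


def build_context(docs: list[Doc], budget_chars: int) -> tuple[str, list[str]]:
    """Pack documents in priority order until the budget is used up.

    Returns the assembled context and the names of documents that did not fit.
    Character count is only a rough proxy for tokens.
    """
    included, skipped, used = [], [], 0
    for doc in sorted(docs, key=lambda d: d.priority):
        section = f"## {doc.name}\n{doc.text}\n"
        if used + len(section) <= budget_chars:
            included.append(section)
            used += len(section)
        else:
            skipped.append(doc.name)
    return "".join(included), skipped


# Hypothetical project documents, for illustration only.
docs = [
    Doc("CHANGELOG.md", "x" * 500, priority=3),
    Doc("ARCHITECTURE.md", "Services talk over gRPC.", priority=1),
    Doc("CONSTRAINTS.md", "No new dependencies.", priority=2),
]
context, skipped = build_context(docs, budget_chars=200)
print(context)
print("left out:", skipped)
```

Reporting what was left out matters as much as what went in: if the model later gets something wrong about the changelog, you already know it never saw it.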

The Deeper Lesson

There is an old problem in technical debates: the person who seems smartest is sometimes the one who is best at using jargon to end the conversation rather than advance it. They take the argument into unfamiliar territory and leave you nodding along until you are back at your desk and realize something was off.

AI assistants can do this too, just faster and at scale. They can generate convincing explanations for approaches that do not actually make sense in your context. The difference is that an AI has no ego. It will not double down in a meeting. But that also means you are fully responsible for catching the error.

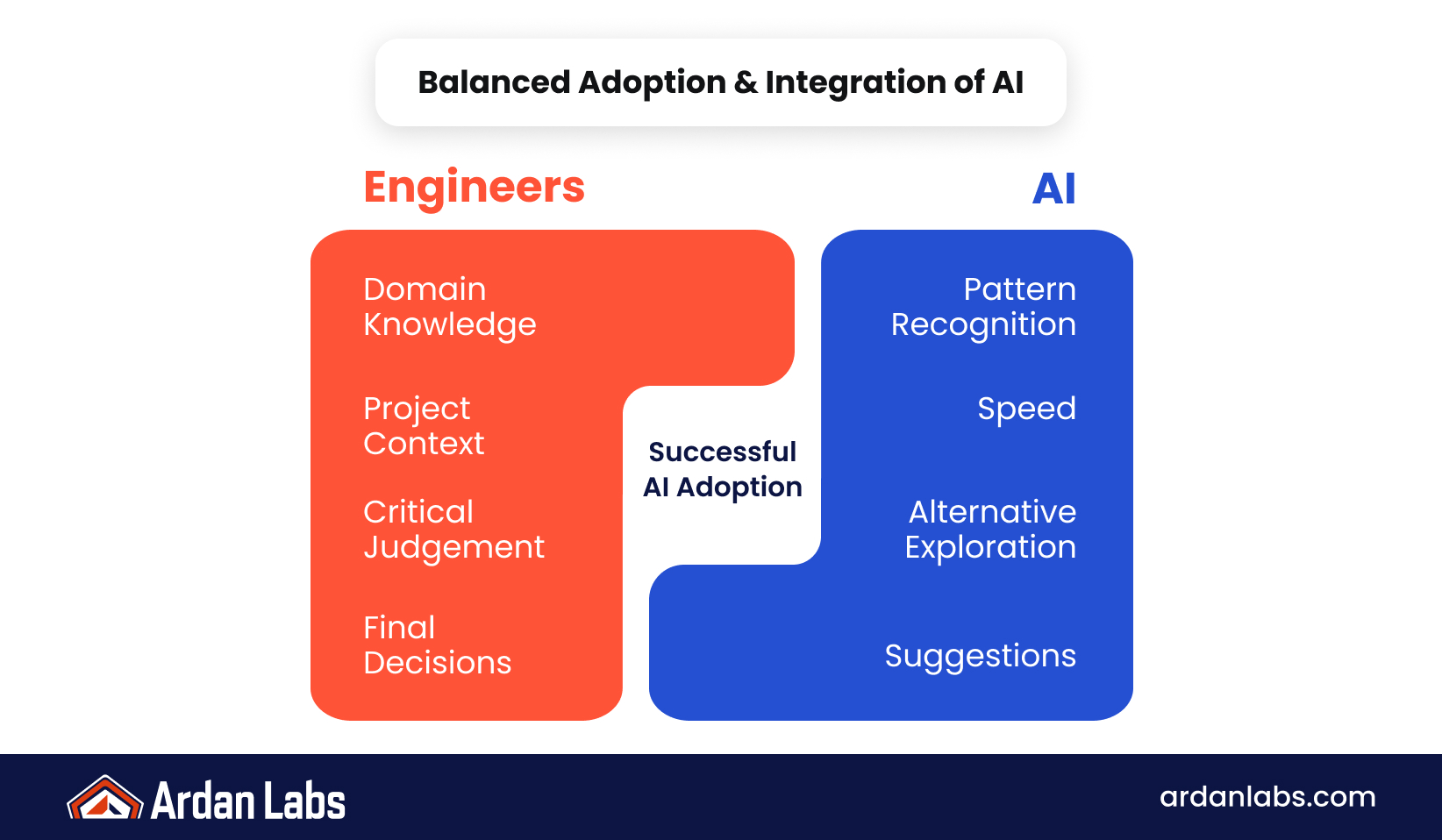

The Partnership Model

The most effective use of AI coding assistants is not one where the model thinks and you validate. It is a genuine partnership where you are both thinking: you bring domain knowledge, project context, and critical judgment, and the model brings pattern recognition, speed, and the ability to quickly explore alternatives.

This requires:

- Active skepticism rather than passive acceptance

- Rich context rather than minimal prompts

- Human review rather than solo development

- Verification practices rather than trust

- Understanding rather than blind reliance

Moving Forward

As AI tools become more sophisticated and more integrated into development workflows, critical thinking becomes more important, not less. The better these tools get at sounding right, the more essential it is that we develop our ability to think independently about whether they are right.

The conversation among Ardan Labs engineers reflects something deeper than just tool skepticism. It is a commitment to the principle that technology should amplify human judgment, not replace it.

In a world where AI can convincingly argue for almost anything, the differentiator is not the tool. It is the engineer who knows how to ask the right questions, who understands the limits of their tools, and who maintains the discipline to validate before deploying.

That is not paranoia. That is professionalism.

Where Experienced Engineering Meets Modern AI

The future of software development will belong to engineers who know how to think critically alongside AI.

At Ardan Labs, we help teams build that capability through implementation guidance, advanced engineering training, and development rooted in production experience, systems thinking, and modern AI workflows.

Because convincing output is not the same as correct output, and experienced engineering still matters.

Frequently Asked Questions

The best approach is multi-layered validation: have another engineer review it, ask the AI to generate unit tests for the code, and verify those tests pass. Do not rely on the code looking good or the explanation sounding logical. Concrete evidence such as passing tests, peer review, and performance metrics matters more than confidence.

AI models generate text based on patterns in their training data. They do not "know" anything; they predict the next most likely token. When context is incomplete or ambiguous, they will confidently produce plausible-sounding but incorrect answers. This is called hallucination, and it is a fundamental characteristic of how these models work, not a bug that can be "fixed."

Context is everything. The more specific information you provide about your project, constraints, architecture, and requirements, the better the model can generate relevant solutions. A poorly informed AI will confidently suggest approaches that do not fit your situation. Rich context forces the model to work within the bounds of your actual problem.

Yes, but with oversight. The same way you would not let an intern commit code without review, do not let AI commit code without validation. Treat AI as a productivity tool that generates candidates for review, not as a decision-maker. Code reviews, tests, and performance validation remain essential.

You cannot eliminate hallucination, but you can reduce it significantly by providing better context. Include relevant documentation, project structure, existing code patterns, and explicit constraints. When the AI understands the full picture, it has less room to invent plausible falsehoods. Also ask it to enumerate details rather than confirm your assumptions; that forces specificity.

Asking for confirmation invites the AI to agree with you. Asking for enumeration or analysis forces it to work harder and reveal assumptions. "Are these approaches equivalent?" might get a yes or no that sounds authoritative. "What are the pros and cons of each approach?" forces more thorough reasoning and gives you more to evaluate.

Not entirely. An AI can generate convincing explanations that are partially or entirely wrong. The explanation should complement other validation, not replace it. Always verify the explanation against documentation, tests, and actual behavior. An elegant explanation is not evidence of correctness.

Larger context windows allow you to provide more information, which generally improves accuracy. But size is not the whole story; quality and relevance matter too. A focused, well-organized context is better than a dump of everything. Learn to manage what you feed the model. The more signal (relevant information) and the less noise, the better the output.