Machine Learning Consulting

Looking to implement practical artificial intelligence & machine learning in your organization? Partner with us to integrate predictive analytics into your business.

Implementing Practical Artificial Intelligence

Almost every company is considering, or is in the process of, integrating artificial intelligence (AI), machine learning (ML), or predictive analytics into their business. Huge value can be gained from leveraging your organization’s existing data to optimize logistics, make recommendations to users, or predict anomalous behavior. AI/ML models have proven to be well suited to these and many other problems.

Kicking off an AI/ML initiative can be an intimidating process because, among other things:

- Your more traditional software engineers are unfamiliar with the theoretical foundations, languages and frameworks utilized in AI applications.

- Your infrastructure team(s) do not understand where and how AI applications can or should interface with existing systems.

- You are not sure whether the AI methods that are so hyped on social media could actually bring value given your data and goals.

Bottom Line

How can we take the hype of AI/ML and make it practical in a real-life business with existing teams, infrastructure, and processes? The answer to this question is what I would call Practical AI, and in the words of my good friend Chris Benson (a chief scientist at Honeywell):

You can, and likely should, be considering how AI will be integrated into your engineering organization. However, when starting this sort of effort, you should follow at least some of the guidelines above to ensure that you are integrating Practical Artificial Intelligence, and not just wasting money on intellectually interesting experiments.

Creating practical Artificial Intelligence / Machine Learning Models

The recent accomplishments in AI research are astounding! Researchers now have models that can learn to play complex games without any prior knowledge and models that can control driverless cars. Yet most businesses don't need to play board games or drive autonomous vehicles, so what defines a practical AI/ML model in a more realistic business setting?

A practical AI/ML model is:

- Capable of being trained on and utilized with the data that you have available.

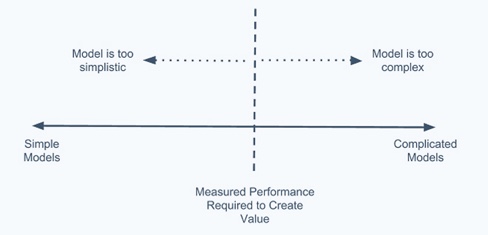

- Complex enough to create real value, but simple enough to be reproducible and debuggable.

- Explainable to or understood by more than just one individual within an organization.

Although certain models and frameworks, such as convolutional neural networks implemented in TensorFlow, are capable of detecting objects within images, your company might not even have image data to process. You need to identify both (i) what relevant data is available or can be made available to models, and (ii) what mission-critical information or predictions would create real value. Item (ii) can often be accomplished before hiring some fancy AI Ph.D. who is only interested in working on a small subset of problems. Once you know what mission-critical information or predictions you want, you can start researching what AI methods and expertise you will need to reach the end goals of your specific use case.

The AI experts that you task with realizing these end goals should also know that you value simplicity and debuggability. You should try to establish evaluation metrics or measures of value at the outset of a project, so that you end up with the simplest model that meets those metrics and avoid any further complication. If you only need to detect anomalous user transactions with 90% accuracy, there is no need to spend months of effort creating an extremely complex model that reaches 99.99% accuracy.
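As a rough illustration of "the simplest model that meets the metric," the sketch below walks a list of candidate models ordered from simplest to most complex and stops at the first one that reaches an agreed accuracy target. Everything in it is an assumption made for illustration: the function names, the three rule-based "models," the `amount` and `foreign` fields, and the ten made-up transactions. A real project would plug in its own models and held-out data.

```python
from typing import Callable, Optional, Sequence, Tuple

# Hypothetical shapes: a transaction is a dict of features,
# and its label is True when the transaction is anomalous.
Example = Tuple[dict, bool]
Model = Callable[[dict], bool]


def accuracy(model: Model, examples: Sequence[Example]) -> float:
    """Fraction of held-out examples the model labels correctly."""
    if not examples:
        raise ValueError("need at least one labeled example")
    correct = sum(1 for features, label in examples if model(features) == label)
    return correct / len(examples)


def simplest_model_meeting_target(
    candidates: Sequence[Tuple[str, Model]],
    examples: Sequence[Example],
    target: float,
) -> Optional[Tuple[str, float]]:
    """Return (name, score) of the first candidate that reaches the target.

    Candidates must be ordered from simplest to most complex, so the
    search stops before any extra complexity is considered.
    """
    for name, model in candidates:
        score = accuracy(model, examples)
        if score >= target:
            return name, score
    return None  # nothing met the target; revisit the data or the goal


# Made-up held-out transactions, for illustration only.
EXAMPLES = [
    ({"amount": 50, "foreign": False}, False),
    ({"amount": 20, "foreign": False}, False),
    ({"amount": 5000, "foreign": False}, True),
    ({"amount": 7000, "foreign": True}, True),
    ({"amount": 30, "foreign": False}, False),
    ({"amount": 100, "foreign": False}, False),
    ({"amount": 9000, "foreign": False}, True),
    ({"amount": 200, "foreign": True}, True),
    ({"amount": 80, "foreign": False}, False),
    ({"amount": 60, "foreign": False}, False),
]

# Hypothetical candidates, ordered simplest first.
CANDIDATES = [
    ("always_normal", lambda t: False),
    ("amount_threshold", lambda t: t["amount"] > 1000),
    ("amount_or_foreign", lambda t: t["amount"] > 1000 or t["foreign"]),
]

print(simplest_model_meeting_target(CANDIDATES, EXAMPLES, target=0.90))
# prints ('amount_threshold', 0.9)
```

With a 90% target the search stops at the single-threshold rule, even though the two-feature rule scores higher on this toy data. The extra complexity is only considered if the target is raised.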

Creating deployable Artificial Intelligence software components

Even if your engineers and scientists are able to create fancy AI models that predict useful things, this does not mean that those AI models are going to create real value in your organization. If you cannot deploy those models as software components in your infrastructure, they are merely an interesting intellectual exercise.

Considerations around how AI workflows will interact with your existing data storage and software applications should be an initial part of project planning, not an afterthought. If you have applications, for example, already deployed as containers on Kubernetes, you should consider how you might integrate your AI workflows in a containerized way on top of Kubernetes. If your team has expertise in Go and Python, but not R or Julia, you might consider writing your AI workflows using Go and Python from the beginning. Decisions like these can ease the burden of deployment both for data scientists and for the rest of an engineering organization.
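To make the idea of a model as a deployable software component more concrete, here is a minimal sketch of a prediction service written with only the Python standard library. It is one possible shape, not a recommended architecture: the `/predict` and `/healthz` paths, the `amount` field, the threshold rule standing in for a trained model, and port 8080 are all assumptions chosen for illustration. A service like this could be packaged in a container image and run alongside your other workloads.

```python
import json
from http.server import BaseHTTPRequestHandler, HTTPServer
from typing import Tuple

AMOUNT_THRESHOLD = 1000.0  # hypothetical stand-in for a trained model


def predict(features: dict) -> dict:
    """Score one transaction; a real service would call the trained model here."""
    amount = float(features["amount"])
    return {"anomalous": amount > AMOUNT_THRESHOLD}


def handle_predict(body: bytes) -> Tuple[int, dict]:
    """Turn a raw request body into an HTTP status code and a JSON-able payload."""
    try:
        return 200, predict(json.loads(body))
    except (ValueError, KeyError, TypeError) as err:
        # Malformed JSON, a missing field, or a non-numeric amount.
        return 400, {"error": f"bad request: {err}"}


class PredictionHandler(BaseHTTPRequestHandler):
    def _send_json(self, status: int, payload: dict) -> None:
        data = json.dumps(payload).encode("utf-8")
        self.send_response(status)
        self.send_header("Content-Type", "application/json")
        self.send_header("Content-Length", str(len(data)))
        self.end_headers()
        self.wfile.write(data)

    def do_GET(self) -> None:
        # A health endpoint lets an orchestrator check that the service is up.
        if self.path == "/healthz":
            self._send_json(200, {"status": "ok"})
        else:
            self._send_json(404, {"error": "not found"})

    def do_POST(self) -> None:
        if self.path != "/predict":
            self._send_json(404, {"error": "not found"})
            return
        length = int(self.headers.get("Content-Length", 0))
        status, payload = handle_predict(self.rfile.read(length))
        self._send_json(status, payload)


def make_server(port: int = 8080) -> HTTPServer:
    """Build the server; call .serve_forever() on the result to run it."""
    return HTTPServer(("0.0.0.0", port), PredictionHandler)
```

Calling `make_server().serve_forever()` starts the service. Keeping `handle_predict` separate from the HTTP plumbing means the scoring logic can be tested on realistic sample payloads without starting a server.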

One way to accomplish this goal of creating deployable models is to give the team members developing the AI models some ownership over the data pipeline that will interact with their models. If these individuals are developing their workflows with production in mind and testing them on realistic sample data and environments, you are much more likely to actually deploy their applications. Although hiring an individual with experience in AI research might seem like a great idea, it may be more valuable for you to seek out individuals with expertise in data infrastructure who can also learn enough to integrate AI where applicable.

“Practical AI is about creating models that can be wrapped into software components for deployment into products and services”
